
Notice of Disagreement Example Form: What You Should Know

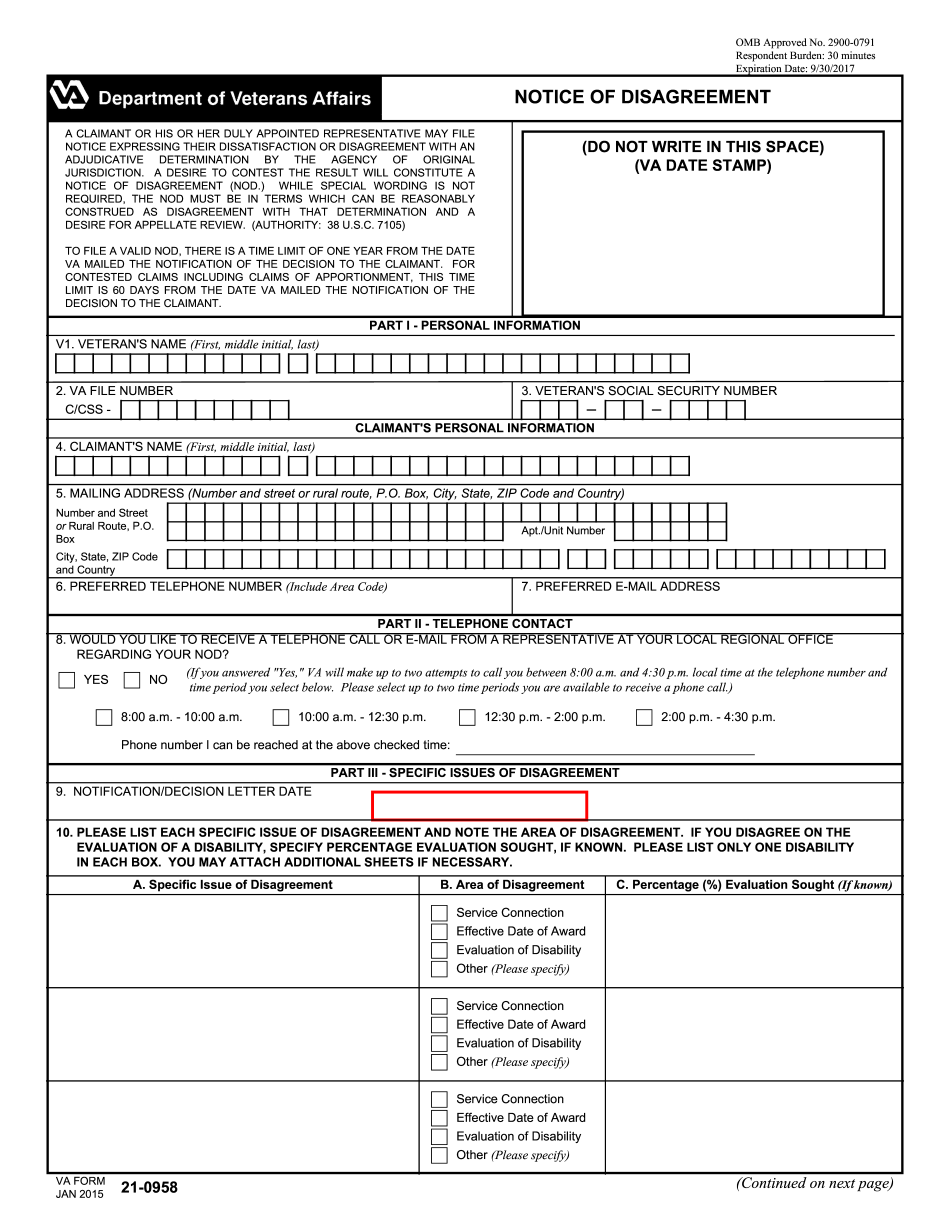

This form is the only legal document you …

If your decision did not include an appeal and you wish to appeal, use this form. NOTE: This form is used only for claims for disability compensation that were decided on FEBRUARY 19, 2019. It applies only to claims for mental and physical disabilities that were …

The following information must be filled out to initiate an appeal:

- Name and address
- E-mail address
- E-mail address of parent(s) (who will not get this letter)

If you have received a disability claim decision and you disagree with how a rating formula was applied (the VA form called a “Criteria Based Disability Rating”), please complete an appeal. Please sign this form if you agree to be contacted by the VA regarding an appeal.

If you want your veteran-eligible claim rated on the basis of your own individual evaluation of each criterion (physical, psychiatric, developmental, hearing, and intellectual) rather than your RO's opinion, please complete an …

If you want your claim rated on the basis of your own individual evaluation of each factor and the RO's rating using the same factors, in addition to the ratings the RO …

The following information is required, in addition to the required information below, to process an appeal:

If you are not rated by your RO, DO NOT sign below. If you want your claim rated by any of the rating experts, DO NOT sign below.

If you have completed the above checklist and still disagree with the current decision, please contact [email protected] and tell them … If you do not receive a response after several attempts, something may be wrong with your Veteran's Record (VA Form 13-0050). In that case, contact [email protected]

You may send only one copy, as a “Notice of Disagreement” or a “Notice of Disagreement of VA Form 13-0050”, to …

If you have been rated in the past, DO NOT sign below.

Online solutions help you manage your document administration and make your workflows more efficient. Follow this quick guide to complete the 2015-2025 VA 21-0958, avoid mistakes, and submit it on time:

How to complete the 2015-2025 VA 21-0958 online:

- On the page with the document, click the start button and go to the editor.

- Use the hints to fill in the relevant fields.

- Add your personal information and contact details.

- Make sure you enter correct information and numbers in the appropriate fields.

- Carefully check the content of the form, as well as its grammar and punctuation.

- Go to the Support section if you have questions, or contact our support team.

- Add an electronic signature to your 2015-2025 VA 21-0958 using the Sign Tool.

- Once the form is complete, press Done.

- Send the completed document by email or fax, print it out, or save it to your device.

The PDF editor lets you make changes to your 2015-2025 VA 21-0958 from any internet-connected device, customize it to your needs, sign it electronically, and distribute it in several ways.